Run web, mobile, or API tests in parallel

Use the ParallelRunner CLI tool to run multiple UFT One tests in parallel. You can run GUI tests on mobile devices and web browsers, and you can also run API tests. The ParallelRunner also supports API tests called by GUI tests, and vice versa.

Running API tests using the ParallelRunner is supported on UFT One versions 15.0.2 and later.

Supported environments

Parallel web testing is supported on specific browsers, for controls supported by the Web Add-in. For the list of supported browsers, see the list of browser parameter values.

Parallel testing on mobile devices is supported when using UFT Mobile and not when testing on local devices.

Before starting parallel testing

Before starting parallel testing, do the following:

| Licenses | Ensure that your licenses support your use cases. Mobile tests: Each license, seat or concurrent, supports running up to four tests in parallel. Non-mobile tests: Running tests in parallel requires a concurrent license server. Each test run consumes a single concurrent license. |

| Access to ParallelRunner port | Make sure that the firewall on the UFT One computer does not block the port used by the ParallelRunner (50051 by default). |

| Mobile web testing | If you are testing mobile web browsers, ensure that the correct browser is selected in the Record and Run Settings, on the Mobile tab. For more details, see Define settings to test mobile apps. |

| Web testing | If you specify web browsers in the ParallelRunner command, these browsers are launched before running the test. This overrides the settings defined in the Record and Run Settings, and any browser parameterization configured in the test. |

Run tests in parallel

The ParallelRunner tool is located in the <UFT One installation folder>/bin directory.

Do the following:

- Close UFT One. ParallelRunner and UFT One cannot run at the same time.

- Run your tests in parallel from the command line, defining your test and environment details using one of the following methods:

  - Define test, environments, and parameters using command line options

  - Define tests, environments, and parameters using a .json file

If you use a .json file, you can synchronize and control your parallel run by creating dependencies between test runs and controlling the start time of the runs. For details, see Use conditions to synchronize your parallel run.

Tests run using ParallelRunner are run in Fast mode. For details, see Fast mode.

Define test, environments, and parameters using command line options

When running tests from the command line, run each test separately with individual commands.

For GUI tests, each command runs the test multiple times if you provide different alternatives to run. You can provide alternatives for the following:

- Browsers

- Devices

- Data tables

- Test parameters

To run tests from the command line, use the syntaxes defined in the sections below. Use semicolons (;) to separate the alternatives.

ParallelRunner runs the test as many times as necessary to test all combinations of the alternatives you provide.

For example:

Run the test three times, one for each browser:

ParallelRunner -t "C:\GUIWebTest" -b "Chrome;IE;Firefox"

Run the test four times, one for each data table on each browser:

ParallelRunner -t "C:\GUIWebTest" -b "Chrome;Firefox" -dt ".\Datatable1.xlsx;.\Datatable2.xlsx"

Run the test eight times, once for each combination of browser, data table, and set of test parameters:

ParallelRunner -t "C:\GUIWebTest" -b "Chrome;Firefox" -dt ".\Datatable1.xlsx;.\Datatable2.xlsx" -tp "param1:value1, param2:value2; param1:value3, param2:value4"
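The run counts in the examples above follow from multiplying the number of alternatives in each option. The following is a minimal Python sketch of that arithmetic, not ParallelRunner's actual implementation. The helper names `split_alternatives` and `expand_runs` are hypothetical, and the naive split on semicolons does not handle quoted parameter values that contain semicolons.

```python
from itertools import product


def split_alternatives(option_value):
    """Split a semicolon-separated option value into its alternatives."""
    return [part.strip() for part in option_value.split(";") if part.strip()]


def expand_runs(browsers, data_tables, test_parameters):
    """Return one (browser, data table, parameter set) tuple per expected run."""
    return list(product(
        split_alternatives(browsers),
        split_alternatives(data_tables),
        split_alternatives(test_parameters),
    ))


runs = expand_runs(
    "Chrome;Firefox",
    r".\Datatable1.xlsx;.\Datatable2.xlsx",
    "param1:value1, param2:value2; param1:value3, param2:value4",
)
for browser, table, params in runs:
    print(browser, table, params)
print(len(runs), "runs")
```

Two browsers, two data tables, and two parameter sets give 2 x 2 x 2 = 8 combinations, matching the last example.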

Tip: Alternatively, reference a .json file with multiple tests configured in a single file. In this file, separately configure each test run. For details, see Define tests, environments, and parameters using a .json file.

From the command line, run each web test as shown in the following example:

ParallelRunner -t "C:\GUIWebTest_Demo" -b "Chrome;IE;Firefox" -r "C:\parallelexecution"

If you specify multiple browsers, the test is run multiple times, one run on each browser.

Mobile test command line syntax

From the command line, run each mobile test as shown in the following example:

ParallelRunner -t "C:\GUITest_Demo" -d "deviceID:HT69K0201987;manufacturer:samsung,osType:Android,osVersion:>6.0;manufacturer:motorola,model:Nexus 6" -r "C:\parallelexecution"

If you specify multiple devices, the test is run multiple times, one run on each device.
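To make the structure of the device string easier to see, here is a short Python sketch that splits it the way the example reads: semicolons separate devices, commas separate attributes, and a colon separates each attribute name from its value. The function `parse_devices` is hypothetical and only illustrates the format. It is not how ParallelRunner itself parses the option.

```python
def parse_devices(option_value):
    """Parse a -d option value into a list of {attribute: value} dicts."""
    devices = []
    for device_spec in option_value.split(";"):
        attributes = {}
        for pair in device_spec.split(","):
            # Split on the first colon only, so values keep any later colons.
            name, _, value = pair.partition(":")
            attributes[name] = value
        devices.append(attributes)
    return devices


devices = parse_devices(
    "deviceID:HT69K0201987;"
    "manufacturer:samsung,osType:Android,osVersion:>6.0;"
    "manufacturer:motorola,model:Nexus 6"
)
for device in devices:
    print(device)
```

The example string describes three devices, so the test runs three times.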

Wait for an available device

You can instruct the ParallelRunner to wait for an appropriate device to become available instead of failing a test run immediately if no device is available. To do this, add the -we option to your ParallelRunner command.

From the command line, run each API test as shown in the following example:

ParallelRunner -t "C:\APITest" -r "C:\API_TestResults"
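If you launch these commands from a script, it can help to assemble the arguments in one place. The following Python sketch builds an argument list using only the short options shown in this topic (-t, -b, -d, -r, -we). The function `build_command` is hypothetical, and the sketch assumes the ParallelRunner executable can be found on the system path.

```python
def build_command(test, browsers=None, devices=None, results=None,
                  wait_for_device=False):
    """Build a ParallelRunner argument list from the documented short options."""
    command = ["ParallelRunner", "-t", test]
    if browsers:
        command += ["-b", ";".join(browsers)]
    if devices:
        command += ["-d", ";".join(devices)]
    if results:
        command += ["-r", results]
    if wait_for_device:
        command.append("-we")
    return command


web = build_command(
    r"C:\GUIWebTest_Demo",
    browsers=["Chrome", "IE", "Firefox"],
    results=r"C:\parallelexecution",
)
api = build_command(r"C:\APITest", results=r"C:\API_TestResults")
print(web)
print(api)
```

A list like this can be passed to Python's subprocess.run, which takes care of quoting paths that contain spaces.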

Use the following options when running tests directly from the command line.

- Option names are not case sensitive.

- Use a dash (-) followed by the option abbreviation, or a double dash (--) followed by the option's full name.

| -t | String. Required. Defines the path to the folder containing the UFT One test you want to run. If your path contains spaces, you must surround the path with double quotes (""). For example: -t ".\GUITest Demo". Multiple tests are not supported in the same path. |

| -b, --browsers | String. Optional. Supported for web tests. Define multiple browsers using semicolons (;). ParallelRunner runs the test multiple times, to test on all specified browsers. The examples in this topic use the values Chrome, IE, and Firefox. |

| -d | String. Optional. Supported for mobile tests. Define multiple devices using semicolons (;). ParallelRunner runs the test multiple times, to test on all specified devices. Define devices using a specific device ID, or find devices by capabilities, using attributes such as deviceID, manufacturer, model, osType, and osVersion in this string. Note: Do not add extra spaces around the commas, colons, or semicolons in this string. We recommend always surrounding the string in double quotes ("") to support any special characters. For example: -d "manufacturer:apple,osType:iOS,osVersion:>=11.0" |

| -dt | String. Optional. Supported for web or mobile tests. Defines the path to the data table you want to use for this test. UFT One uses the table you provide instead of the data table saved with the test. Define multiple data tables using semicolons (;). ParallelRunner runs the test multiple times, to test with all specified data tables. If your path contains spaces, you must surround the path with double quotes (""). For example: -dt ".\Data Tables\Datatable1.xlsx;.\Data Tables\Datatable2.xlsx" |

| -o | String. Optional. Determines the result output shown in the console during and after the test run. |

| -r | String. Optional. Defines the path where you want to save your test run results. By default, run results are saved at %Temp%\TempResults. If your string contains spaces, you must surround the string with double quotes (""). For example: -r "%Temp%\Temp Results" |

| -rl | Optional. Include in your command to automatically open the run results when the test run starts. Use the -rr (--report-auto-refresh) option to determine how often the results are refreshed. For more details, see Sample ParallelRunner response and run results. |

| -rn | String. Optional. Defines the report name, which is displayed in the browser tab when you open the parallel run's summary report. By default, the name is Parallel Report. Customize this name to help distinguish between runs when you open multiple reports at once. UFT One also adds a timestamp to each report name for this purpose. If your string contains spaces, you must surround the string with double quotes (""). For example: -rn "My Report Title" |

| -rr, --report-auto-refresh | Optional. Determines how often an open run results file is automatically refreshed, in seconds. For example: -rr 5. If no integer is provided, the default is 5 seconds. |

| -tp, --testParameters | String. Optional. Supported for web and mobile tests. Defines the values to use for the test parameters. Provide a comma-separated list of parameter name-value pairs. For test parameters that you do not specify, UFT One uses the default values defined in the test itself. For example: -tp "param1:value1,param2:value2". Define multiple test parameter sets using semicolons (;). ParallelRunner runs the test multiple times, to test with all specified test parameter sets. For example: -tp "param1:value1, param2:value2; param1:value3, param2:value4". If your parameter value includes commas (,), semicolons (;), or colons (:), surround the value with a backslash followed by a double quote (\"). For example, if the value of TimeParam is 10:00;00, use: -tp "TimeParam:\"10:00;00\"". Note: You cannot include double quotes (") inside a parameter value using the command line options. Instead, use a JSON configuration file for your ParallelRunner command. |

| -we | Optional. Supported for mobile tests. Include in your command to instruct each mobile test run to wait for an appropriate device to become available instead of failing immediately if no device is available. |
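The quoting rules for -tp values are easy to get wrong by hand. As an illustration only, the Python sketch below formats parameter sets into the option value exactly as you would type it on the command line, including the outer double quotes. The helpers `format_value` and `format_tp` are hypothetical names, and the sketch covers only the rules stated in the -tp description above.

```python
SEPARATORS = ",;:"


def format_value(value):
    """Wrap a value in \\" ... \\" when it contains a separator character."""
    if '"' in value:
        # The command line options cannot carry double quotes inside a value.
        raise ValueError("value contains a double quote; use a .json file")
    if any(ch in value for ch in SEPARATORS):
        return '\\"' + value + '\\"'
    return value


def format_tp(parameter_sets):
    """Return the -tp value as typed on the command line, outer quotes included."""
    sets = [
        ",".join(f"{name}:{format_value(value)}" for name, value in params.items())
        for params in parameter_sets
    ]
    return '"' + ";".join(sets) + '"'


print(format_tp([{"TimeParam": "10:00;00"}]))
print(format_tp([
    {"param1": "value1", "param2": "value2"},
    {"param1": "value3", "param2": "value4"},
]))
```

The first call reproduces the TimeParam example from the -tp description. The second produces two parameter sets separated by a semicolon.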

Define tests, environments, and parameters using a .json file

Run ParallelRunner and reference a .json configuration file directly from the command line.

In the .json file, you can:

- Specify multiple tests to run in parallel.

- Define multiple environments and parameters to use for multiple runs.

To run the ParallelRunner with a .json configuration file:

- In a .json file, add separate lines for each test run. Use the .json options to describe the environment and parameters to use for each run.

- Reference the .json file in the command line using the -c option (String), which defines the path to a .json configuration file.

Use the following syntax:

ParallelRunner -c <.json file path>

For example:

ParallelRunner -c parallelexecution.json

- Add additional command line options to configure your run results. For details, see -o, -rl and -rr.

These are the only command line options you can use together with a .json configuration file. Any other command line options are ignored.
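One way to avoid hand-editing mistakes is to generate the configuration file from a script. The sketch below uses Python's standard json module to build a small configuration from the option names documented in this topic. The function `make_config` is a hypothetical helper, and the paths are the illustrative ones used elsewhere on this page.

```python
import json


def make_config(report_path, runs, report_name=None):
    """Assemble a ParallelRunner-style configuration as a plain dictionary."""
    config = {"reportPath": report_path, "parallelRuns": runs}
    if report_name:
        config["reportName"] = report_name
    return config


config = make_config(
    r"C:\parallelexecution",
    [
        {"test": r"C:\Users\qtp\Documents\API Test", "reportSuffix": "API"},
        {
            "test": r"C:\Users\qtp\Documents\Unified Functional Testing",
            "reportSuffix": "Web_Chrome",
            "env": {"web": {"browser": "Chrome"}},
        },
    ],
    report_name="My Report Title",
)

# json.dumps doubles the backslashes in Windows paths automatically.
text = json.dumps(config, indent=2)
print(text)
```

Saving the printed text to a file such as parallelexecution.json would let you pass it to ParallelRunner with the -c option.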

Sample .json configuration file

The following is a sample .json configuration file syntax. In this file, the last test is run on both a local desktop browser and a mobile device. See also Sample .json configuration file with synchronization.

{

"reportPath": "C:\\parallelexecution",

"reportName":"My Report Title",

"waitForEnvironment":true,

"parallelRuns": [

{

"test": "C:\\Users\\qtp\\Documents\\Unified Functional Testing",

"dataTable": "C:\\Data Tables\\Datatable1.xls",

"testParameter":{

"para1":"val1",

"para2":"val2"

},

"reportSuffix": "Samsung_OS_above_6",

"env": {

"mobile": {

"device": {

"manufacturer": "samsung",

"osVersion": ">6.0",

"osType": "Android"

}

}

}

},

{

"test": "C:\\Users\\qtp\\Documents\\Unified Functional Testing",

"dataTable": "C:\\Data Tables\\Datatable2.xls",

"testParameter":{

"para1":"val3",

"para2":"val4"

},

"reportSuffix": "Moto_Nexus_6_OS_equals_7",

"env": {

"mobile": {

"device": {

"manufacturer": "motorola",

"model": "Nexus 6",

"osVersion": "7.0",

"osType": "Android"

}

}

}

},

{

"test": "C:\\Users\\qtp\\Documents\\Unified Functional Testing",

"reportSuffix": "ID_HT69K0201987",

"env": {

"mobile": {

"device": {

"deviceID": "HT69K0201987"

}

}

}

},

{

"test": "C:\\Users\\qtp\\Documents\\Unified Functional Testing",

"reportSuffix": "Web_Chrome",

"env": {

"web": {

"browser": "Chrome"

}

}

},

{

"test": "C:\\Users\\qtp\\Documents\\API Test",

"reportSuffix": "API"

},

{

"test": "C:\\Users\\qtp\\Documents\\Unified Functional Testing",

"dataTable": "C:\\Data Tables\\Datatable3.xls",

"reportSuffix": "Mobile_Web",

"env": {

"web": {

"browser": "Chrome"

},

"mobile": {

"device": {

"manufacturer": "motorola",

"model": "Nexus 6",

"osVersion": "7.0",

"osType": "Android"

}

}

}

}

]

}
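Because a single missing comma or quote makes the whole file unreadable, it can be worth checking a configuration file before running it. The following Python sketch reports JSON syntax errors with their position and checks that each run names a test. The function `check_config` is hypothetical, and treating test as mandatory in every run is an assumption based on the -t option being required on the command line.

```python
import json


def check_config(text):
    """Return a list of problems found in a .json configuration string."""
    try:
        config = json.loads(text)
    except json.JSONDecodeError as error:
        return [f"line {error.lineno}, column {error.colno}: {error.msg}"]
    if not isinstance(config, dict):
        return ["top level must be a JSON object"]
    runs = config.get("parallelRuns")
    if not isinstance(runs, list) or not runs:
        return ["parallelRuns must be a non-empty list"]
    problems = []
    for index, run in enumerate(runs, start=1):
        # Assumption: every run needs a test path, as -t does on the command line.
        if "test" not in run:
            problems.append(f"run {index}: missing test")
    return problems


good = '{"parallelRuns": [{"test": "C:\\\\APITest", "reportSuffix": "API"}]}'
# Missing comma after the testParameter object.
broken = '{"parallelRuns": [{"test": "C:\\\\T", "testParameter": {"p": "v"} "reportSuffix": "x"}]}'
print(check_config(good))
print(check_config(broken))
```

The first call returns an empty list. The second returns one message that points at the position where the comma is missing.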

Option reference for a .json configuration file

Define the following options in a .json configuration file to reference from the command line (case sensitive):

| reportPath | String. Defines the path where you want to save your test run results. By default, run results are saved at %Temp%\TempResults. |

| reportName | String. Defines the report name, which is displayed in the browser tab when you open the parallel run's summary report. By default, the report name is the same as the .json file name. Customize this name to help distinguish between runs when you open multiple reports at once. UFT One also adds a timestamp to each report name for this purpose. |

| test | String. Defines the path to the folder containing the UFT One test you want to run. |

| reportSuffix | String. Defines a suffix to append to the end of your run results file name. For example, you may want to add a suffix that indicates the environment on which the test ran. Example: "reportSuffix": "Samsung_OS_above_6". Default: "[N]". This indicates the index number of the current result instance, if there are multiple results for the same test in the same parallel run. |

| browser | String. Defines the browser to use when running the web test. The sample files in this topic use the value Chrome. |

| deviceID | String. Defines a device ID for a specific device in the UFT Mobile device lab. Note: When both deviceID and device capability attributes are provided, the device capability attributes are ignored. |

| manufacturer | String. Defines a device manufacturer. |

| model | String. Defines a device model. |

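| osVersion | String. Defines a device operating system version. The samples in this topic use values such as "7.0", ">6.0", and ">=11.0". |

| osType | String. Defines a device operating system. The samples in this topic use values such as Android and iOS. |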

| dataTable | String. Supported for web and mobile tests. Defines the path to the data table you want to use for this test run. UFT One uses the table you provide instead of the data table saved with the test. Place the dataTable definition in the section that describes the relevant test run. |

| testParameter | String. Supported for web and mobile tests. Defines the values to use for the test parameters in this test run. Provide a comma-separated list of parameter name-value pairs. For example: "testParameter":{"para1":"val1","para2":"val2"}. Place the testParameter definition in the section that describes the relevant test run. If your parameter value includes a backslash (\) or double quote ("), add a backslash before the character. For example: "para1":"val\"1" assigns the value val"1 to the parameter para1. For test parameters that you do not specify, UFT One uses the default values defined in the test itself. |

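| waitForEnvironment | Set this option to true to instruct each test run to wait for an appropriate device to become available instead of failing immediately if no device is available. |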
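The backslash escaping described for testParameter is the standard JSON string escaping, so a JSON library can apply it for you. The short Python sketch below shows this with the standard json module. The parameter name `path` is hypothetical and is included only to show how a backslash is handled.

```python
import json

test_parameter = {
    "para1": 'val"1',                            # value contains a double quote
    "path": "C:\\Data Tables\\Datatable1.xls",   # value contains backslashes
}

fragment = json.dumps({"testParameter": test_parameter})
print(fragment)

# Reading the fragment back returns the original, unescaped values.
restored = json.loads(fragment)["testParameter"]
print(restored["para1"])
```

In the printed fragment, the double quote appears as \" and each backslash appears doubled, which is the form the configuration file expects.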

Use conditions to synchronize your parallel run

To synchronize and control your parallel run, you can create dependencies between test runs and control the start time of the runs. This is supported only when using a .json file in the ParallelRunner command.

Using conditions, a test run can do one or more of the following:

- Wait for a number of seconds before beginning to run.

- Run after a different test runs.

- Begin only after a different test runs and reaches a specific status.

For each test run defined in the .json file, create conditions that control when the run starts. Build the conditions under the test section, using the elements described below.

You can create the following types of conditions:

- Simple conditions, which contain one or more wait statements.

- Combined conditions, which contain multiple conditions and an AND/OR operator, which specifies whether all conditions must be met.

Sample .json configuration file with synchronization

The following is a sample .json configuration file that provides a synchronized parallel run:

{

"reportPath": "C:\\parallelexecution",

"reportName": "My Report Title",

"waitForEnvironment": true,

"parallelRuns": [{

"test": "C:\\Users\\qtp\\Documents\\Unified Functional Testing\\GUITest_Mobile",

"testRunName": "1",

"condition": {

"operator": "AND",

"conditions": [{

"waitSeconds": 5

}, {

"operator": "OR",

"conditions": [{

"waitSeconds": 10,

"waitForTestRun": "2"

}, {

"waitForTestRun": "3",

"waitSeconds": 10,

"waitForRunStatuses": ["failed", "warning", "canceled"]

}

]

}

]

},

"env": {

"mobile": {

"device": {

"osType": "IOS"

}

}

}

}, {

"test": "C:\\Users\\qtp\\Documents\\Unified Functional Testing\\GUITest_Mobile",

"testRunName": "2",

"condition": {

"waitForTestRun": "3",

"waitSeconds": 10

},

"env": {

"mobile": {

"device": {

"osType": "IOS"

}

}

}

}, {

"test": "C:\\Users\\qtp\\Documents\\Unified Functional Testing\\GUITest_Mobile",

"testRunName": "3",

"env": {

"mobile": {

"device": {

"deviceID": "63010fd84e136ce154ce466d79a2ebd357aa5ce2"

}

}

}

}]

}

When running ParallelRunner with this .json file, the following happens:

- Test run 3 waits for device 63010fd84e136ce154ce466d79a2ebd357aa5ce2.

- Test run 2 waits 10 seconds after test run 3 ends, and then waits for an available IOS device.

- Test run 1 first waits for 5 seconds after the ParallelRunner command is run. Then it waits 10 seconds more after run 2 ends or run 3 reaches a status of failed, warning, or canceled. Then it waits until an IOS device is available.
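When conditions are nested, a mistyped run name in waitForTestRun can be hard to spot. As a rough aid, the Python sketch below walks simple and combined conditions and lists any referenced run names that are not defined in the file. The functions `referenced_runs` and `unknown_references` are hypothetical, and the sketch says nothing about how ParallelRunner itself reacts to an unknown name.

```python
def referenced_runs(condition):
    """Collect every waitForTestRun name in a simple or combined condition."""
    names = set()
    if "waitForTestRun" in condition:
        names.add(condition["waitForTestRun"])
    for nested in condition.get("conditions", []):
        names |= referenced_runs(nested)
    return names


def unknown_references(config):
    """Map each testRunName to the run names it waits for that are not defined."""
    defined = {run.get("testRunName") for run in config["parallelRuns"]}
    problems = {}
    for run in config["parallelRuns"]:
        missing = referenced_runs(run.get("condition", {})) - defined
        if missing:
            problems[run.get("testRunName")] = sorted(missing)
    return problems


# Conditions copied from the synchronized sample; other options are omitted.
config = {"parallelRuns": [
    {"testRunName": "1", "condition": {"operator": "AND", "conditions": [
        {"waitSeconds": 5},
        {"operator": "OR", "conditions": [
            {"waitSeconds": 10, "waitForTestRun": "2"},
            {"waitForTestRun": "3", "waitSeconds": 10,
             "waitForRunStatuses": ["failed", "warning", "canceled"]},
        ]},
    ]}},
    {"testRunName": "2", "condition": {"waitForTestRun": "3", "waitSeconds": 10}},
    {"testRunName": "3"},
]}
print(unknown_references(config))
```

For the sample, every referenced run exists, so the result is an empty dictionary.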

Run steps in isolated mode

When running multiple tests in parallel that have related steps that interact with the same objects, the steps may interfere with each other and cause errors in your parallel test run.

To prevent errors, configure those steps to run in isolated mode, protected from interference from other tests running in parallel.

Surround the steps you want to run in isolated mode with the following utility steps:

- ParallelUtil.StartIsolatedExecution

- ParallelUtil.StopIsolatedExecution

For example:

ParallelUtil.StartIsolatedExecution

Browser("Advantage Shopping").Page("Google").WebEdit("Search").Click

Dim mySendKeys

set mySendKeys = CreateObject("WScript.shell")

mySendKeys.SendKeys("Values")

ParallelUtil.StopIsolatedExecution

For more details, see the ParallelUtil object reference in the UFT One Object Model Reference.

Stop the parallel execution

To quit the test runs from the command line, press CTRL+C on the keyboard.

- Any test with a status of Running or Pending, indicating that it has not yet finished, stops immediately.

- No run results are generated for these canceled tests.

Sample ParallelRunner response and run results

The following code shows a sample response displayed in the command line after running tests using ParallelRunner.

>ParallelRunner -t ".\GUITest_Demo" -d "manufacturer:samsung,osType:Android,osVersion:Any;deviceID:N2F4C15923006550" -r "C:\parallelexecution" -rn "My Report Name"

Report Folder: C:\parallelexecution\Res1

ProcessId, Test, Report, Status

3328, GUITest_Demo, GUITest_Demo_[1], Running -

3348, GUITest_Demo, GUITest_Demo_[2], Pass

In this example, when the run is complete, the following result files are saved in the C:\parallelexecution\Res1 directory:

- GUITest_Demo_[1]

- GUITest_Demo_[2]
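If you capture the console response in a script, the status table can be read as comma-separated rows. The Python sketch below parses the table from the sample response above. The function `parse_status_table` is hypothetical, the column layout is inferred from this one sample only, and the trailing progress marker after Running is left out of the sample text.

```python
SAMPLE = """\
ProcessId, Test, Report, Status
3328, GUITest_Demo, GUITest_Demo_[1], Running
3348, GUITest_Demo, GUITest_Demo_[2], Pass
"""


def parse_status_table(text):
    """Turn the comma-separated status table into a list of dictionaries."""
    lines = [line for line in text.splitlines() if line.strip()]
    header = [name.strip() for name in lines[0].split(",")]
    rows = []
    for line in lines[1:]:
        cells = [cell.strip() for cell in line.split(",")]
        rows.append(dict(zip(header, cells)))
    return rows


rows = parse_status_table(SAMPLE)
for row in rows:
    print(row["Report"], row["Status"])
```

Each row keeps the process ID, test name, report name, and status under the column names from the header line.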

The parallelrun_results.html file shows results for all tests run in parallel. The report name in the browser tab is My Report Name.

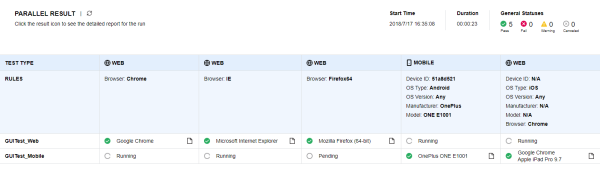

The first row in the table indicates any rules defined by the ParallelRunner commands, and subsequent rows indicate the result and the actual environment used during the test.

Tip: For each test run, click the HTML report  icon to open the specific run results. This is supported only when UFT One is configured to generate HTML run results.

Note: The report provides a separate column for each browser name, using a case-sensitive comparison for browser names. Therefore, separate columns would be provided for IE and ie.

Modify the ParallelRunner port

ParallelRunner uses the port configured in the parallel.ini file located in the <UFT One installation folder>/bin directory. The default port is 50051.

If the port configured there is already in use elsewhere, modify it by doing one of the following:

- Open the <UFT One installation folder>/bin/parallel.ini file for editing, and modify the port number configured.

- If you have permission issues editing this file, create a new file named parallel.custom.ini in the %ProgramData%\UFT directory.

Add the following code:

[Mediator]

Port=<port number>

The parallel.custom.ini file overrides any configuration in the parallel.ini file.
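If you need to create the override file on several machines, a script can write it for you. The following Python sketch writes a [Mediator] section with a Port entry using the standard configparser module. The function `write_custom_port` and the port number 50052 are hypothetical, and the sketch writes to a temporary folder rather than to %ProgramData%\UFT so that it is safe to run anywhere.

```python
import configparser
import os
import tempfile


def write_custom_port(directory, port):
    """Write parallel.custom.ini with a [Mediator] Port entry; return its path."""
    parser = configparser.ConfigParser()
    parser.optionxform = str  # keep "Port" capitalized instead of lowercasing it
    parser["Mediator"] = {"Port": str(port)}
    path = os.path.join(directory, "parallel.custom.ini")
    with open(path, "w", encoding="utf-8") as handle:
        # Write "Port=50052" without spaces around the equals sign.
        parser.write(handle, space_around_delimiters=False)
    return path


with tempfile.TemporaryDirectory() as folder:
    path = write_custom_port(folder, 50052)
    with open(path, encoding="utf-8") as handle:
        content = handle.read()
print(content)
```

To use it for real, pass the %ProgramData%\UFT directory as the first argument and a free port of your choice as the second.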
