Mobile, web, and Windows-based SAP GUI tests

This topic discusses some of the elements that UFT One's Artificial Intelligence (AI) Object Detection uses to support unique identification of objects in your application.

Associate text with objects

The text associated with an object can help identify an object uniquely. For example:

AIUtil("input", "USER NAME").Type "admin"

Using the USER NAME text in this step to describe the object ensures that the step types in the correct field.

When detecting objects in an application, if there are multiple labels around the field, UFT One uses the one that seems most logical for the object's identification.

However, if you decide to use a different label in your object description, UFT One still identifies the object.

Example: When detecting objects in an application, a button is associated with the text on the button, but a field is associated with its label, as opposed to its content.

If a field has multiple labels and UFT One chooses one of them for detection, UFT One still identifies the field correctly when running a test step that uses a different label to describe it.

In some cases, UFT One's AI combines multiple text strings that are close to each other into one text string to identify one object.

You can edit the combined string, keeping just one of the strings for object identification. Make sure to remove whole strings, not parts of a string.

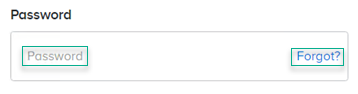

Example:

For the text box shown above, UFT One's AI combines Password and Forgot? into one string to identify the object, and adds the following step to your test script:

AIUtil("text_box", "Password Forgot?").Type

You can remove one whole string from the step, changing it to the following, without causing the test to fail:

AIUtil("text_box", "Password").Type

Configure text recognition options

Customize text recognition options to achieve optimal text recognition in your application. You can set most of these options globally in Tools > Options > GUI Testing > AI Object Detection > OCR. See AI Object Detection Pane (Options Dialog Box > GUI Testing Tab).

In addition, you can add AIRunSettings steps to your test to set text recognition options. The settings are effective until you change them or until the end of the test run. For details, see the AIRunSettings Object in the UFT One Object Model Reference for GUI Testing.

Use the UFT One text recognition settings

Instruct AI object identification to use the UFT One text recognition settings. Depending on your UFT One configuration, this enables you to use Google or Baidu OCR cloud services or other UFT One OCR engines.

If you use this option, the global UFT One text recognition settings take effect. See Configure text recognition settings.

Text recognition in multiple languages

To enable UFT One's AI Object Detection to recognize text in languages other than English, select the relevant OCR languages. This setting is ignored if you chose to use the UFT One text recognition settings.

Exact text matching when identifying objects using AI

AI-based object identification uses AI algorithms for text matching. AI text matching may consider spelling variations and words with similar meaning to be matches.

If necessary, you can temporarily instruct UFT One to look for exactly the specified text when identifying AI test objects. To do this, add an AIRunSettings step to your test that sets the text matching method.

This option is not available as a global option.

Note:

- The text in the application is identified using OCR. If the OCR is inaccurate, AI text matching may be more successful than exact text matching.

- Exact text matching is case-insensitive.
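The difference between the two matching methods can be sketched in Python. This is a minimal, hypothetical illustration: exact matching is modeled as a case-insensitive string comparison, as described above, while `difflib.SequenceMatcher` is only a simple stand-in for AI text matching, not the algorithm UFT One uses. The example strings and the 0.8 threshold are assumptions.

```python
from difflib import SequenceMatcher


def exact_match(described: str, ocr_text: str) -> bool:
    # Exact matching as described above: identical text, case ignored.
    return described.casefold() == ocr_text.casefold()


def fuzzy_match(described: str, ocr_text: str, threshold: float = 0.8) -> bool:
    # Stand-in for AI matching (NOT UFT One's algorithm): accept strings
    # whose similarity ratio reaches the threshold.
    ratio = SequenceMatcher(None, described.casefold(), ocr_text.casefold()).ratio()
    return ratio >= threshold


# OCR misreads the capital "I" in "Sign In" as a lowercase "l".
print(exact_match("Sign In", "SIGN IN"))  # case differs only
print(exact_match("Sign In", "Sign ln"))  # inaccurate OCR breaks exact matching
print(fuzzy_match("Sign In", "Sign ln"))  # a tolerant matcher still succeeds
```

This mirrors the first note: when OCR output is slightly wrong, a tolerant matcher can still find the object while an exact comparison fails.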

Consider UI control borders

Text strings close to each other on a screen may not be related if they belong to different UI controls. For example, you may want text in different table cells identified as separate strings, even if they look like one long string on the screen.

The option Take UI control borders into account when identifying text (selected by default) instructs UFT One to separate such strings.
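The effect of this option can be pictured with a small Python sketch. It is a hypothetical model, not UFT One's implementation: each OCR word carries its horizontal extent and the ID of the UI control it lies in, and neighboring words are merged into one string only when they are close and, if borders are considered, inside the same control. The `Word` type, the 15-pixel gap, and the coordinates are all assumptions.

```python
from dataclasses import dataclass


@dataclass
class Word:
    text: str
    left: int
    right: int
    control_id: int  # hypothetical: the UI control (e.g. table cell) containing the word


def group_words(words, max_gap=15, consider_borders=True):
    """Merge words on one line into strings (simplified, hypothetical model)."""
    groups = []
    for word in sorted(words, key=lambda w: w.left):
        if groups:
            prev = groups[-1][-1]
            close = word.left - prev.right <= max_gap
            same_control = word.control_id == prev.control_id
            # Close words are merged unless borders are considered and
            # the words belong to different controls.
            if close and (same_control or not consider_borders):
                groups[-1].append(word)
                continue
        groups.append([word])
    return [" ".join(w.text for w in group) for group in groups]


# Two adjacent table cells that look like one string on screen.
cells = [Word("Qty", 0, 30, 1), Word("Price", 40, 80, 2)]
print(group_words(cells))                          # separate strings
print(group_words(cells, consider_borders=False))  # one combined string
```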

Trim noise from your strings

Sometimes, icons, check marks, or other UI elements look like letters and add "noise" to the identified text strings. The Trim noise characters option (selected by default) instructs UFT One to remove from the identified strings any characters identified in unexpected areas or controls.

Note: Noise characters are trimmed only if they are separated from the identified text string by a space.
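The space-separation rule in the note can be illustrated with a short Python sketch. It is a simplified, hypothetical heuristic: UFT One decides what is noise based on where characters were detected, whereas this sketch treats any leading or trailing space-separated token without letters or digits as noise. It shows why a check mark attached directly to a word is kept.

```python
def is_noise(token: str) -> bool:
    # Hypothetical rule: a token containing no letters or digits is noise.
    return not any(ch.isalnum() for ch in token)


def trim_noise(text: str) -> str:
    """Remove noise tokens at either end of the string.

    Only whole space-separated tokens are removed, so noise glued to a
    word (no space in between) stays in the string.
    """
    tokens = text.split()
    while tokens and is_noise(tokens[0]):
        tokens.pop(0)
    while tokens and is_noise(tokens[-1]):
        tokens.pop()
    return " ".join(tokens)


print(trim_noise("✓ Save"))    # check mark separated by a space: trimmed
print(trim_noise("✓Save"))     # check mark attached to the word: kept
print(trim_noise("» Next «"))  # arrows on both sides: trimmed
```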

Use regular expressions

When describing text to use for object identification, you can use a regular expression. This way, you can provide a pattern that the object's text should match, instead of specifying the exact text.

This helps identify dynamic text in your application, such as the total price in the shopping cart, or the number of items you have in your inventory.

To use a regular expression, use an AIRegex Object in the AIText parameter of the AIUtil.AIObject or AIUtil.FindTextBlock properties.

For details about the regular expressions supported in UFT One, see the Regular expression characters and usage options.

To make sure your regular expression identifies the right text, you can use the Tools > Regular Expression Evaluator. For details, see Regular Expression Evaluator.

Note: AI-based object identification uses Python regular expressions, while other UFT One features use VBScript regular expressions. For basic expressions, these should be similar. If the expression you create using the resources mentioned above does not meet your requirements, look into the details of Python regular expressions.

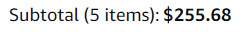

Example: When shopping on Amazon, we want to find the total price of our purchase, which appears twice on the shopping cart page, and looks like this:

The following test uses a regular expression to find this text and print it:

AIUtil.SetContext Browser("creationtime:=0") 'Set the context for AI

Set regex = AIRegex("Subtotal \(\d item\): \$[\d.]+")

print AIUtil.FindTextBlock(regex, micFromTop, 1).GetText

When using regular expressions with AI text matching (micAIMatch), some deviation from the specified text pattern is accepted. Therefore, in the example above, the Subtotal string is returned even though it says "items" in the plural, while the regular expression specifies the singular "item".
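Because AI-based object identification uses Python regular expressions, you can check how the pattern from the example behaves with plain Python `re`. This sketch uses hypothetical subtotal strings. It shows that the pattern, taken literally, matches only the singular form, so the plural case is found only thanks to the tolerance of AI text matching.

```python
import re

# Pattern from the example above, written as a Python raw string.
SUBTOTAL = re.compile(r"Subtotal \(\d item\): \$[\d.]+")

# A pattern that accepts both forms literally: one or more digits, optional "s".
SUBTOTAL_ANY_COUNT = re.compile(r"Subtotal \(\d+ items?\): \$[\d.]+")

# Hypothetical text as it might appear on the cart page.
samples = [
    "Subtotal (1 item): $24.99",
    "Subtotal (3 items): $74.97",
]

for text in samples:
    strict = SUBTOTAL.search(text)
    relaxed = SUBTOTAL_ANY_COUNT.search(text)
    print(text, "| example pattern:", bool(strict), "| items? pattern:", bool(relaxed))
```

If you prefer not to rely on matching tolerance, a pattern such as `\d+ items?` should cover both the singular and plural forms, and any number of items.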

Identify objects by relative location

UFT One must be able to identify an object uniquely to run a step on the object. When multiple objects match your object description, you can add the object location to provide a unique identification. The location can be ordinal (relative to similar objects in the application) or proximal (relative to a different AI object, called an anchor).

A proximal location may help create more stable tests that can withstand predictable changes to the application's user interface. Use anchor objects that are expected to maintain the same relative location to your object over time, even if the user interface design changes.

The AIUtil object identification methods now use location and locationData arguments in which you can provide information about the object's location. See AIObject, FindText, and FindTextBlock.

To describe an object's ordinal location

Provide the following information:

- The object's occurrence: whether it is the first, second, third, and so on.

- The orientation, that is, the direction in which to count occurrences: micFromLeft, micFromRight, micFromTop, or micFromBottom.

For example, AIUtil("button", "ON", micFromLeft, 2).Click clicks the second ON button from the left in your application.
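Ordinal location amounts to sorting the matching objects along the chosen orientation and counting from 1. The following Python sketch is a hypothetical model of that idea, not UFT One code: the `Box` type, the coordinates, and the orientation strings (which mirror the mic constants) are assumptions.

```python
from typing import NamedTuple


class Box(NamedTuple):
    label: str
    x: int  # left edge, in pixels
    y: int  # top edge, in pixels (y grows downward)


# Sort key and direction for each orientation.
ORDER = {
    "FromLeft": (lambda b: b.x, False),
    "FromRight": (lambda b: b.x, True),
    "FromTop": (lambda b: b.y, False),
    "FromBottom": (lambda b: b.y, True),
}


def nth_occurrence(candidates, orientation, occurrence):
    """Return the occurrence-th match (1-based), counted in the given direction."""
    key, reverse = ORDER[orientation]
    ordered = sorted(candidates, key=key, reverse=reverse)
    if not 1 <= occurrence <= len(ordered):
        raise LookupError(f"only {len(ordered)} matching objects found")
    return ordered[occurrence - 1]


# Three identical ON buttons, listed in arbitrary detection order.
buttons = [Box("ON", 230, 40), Box("ON", 10, 40), Box("ON", 120, 40)]
print(nth_occurrence(buttons, "FromLeft", 2))   # the middle button
print(nth_occurrence(buttons, "FromRight", 1))  # the rightmost button
```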

To describe an object's location in proximity to a different AI object

Provide the following information:

- The description of the anchor object. The anchor must be an AI object that belongs to the same context as the object you are describing. The anchor itself can also be described by its location.

- The direction of the anchor object relative to the object you are describing: micWithAnchorOnLeft, micWithAnchorOnRight, micWithAnchorAbove, or micWithAnchorBelow.

UFT One returns the AI object that matches the description and is closest to, and most closely aligned with, the anchor in the specified direction.

For example, this test clicks on the download button to the right of the Linux text, below the Latest Edition text block:

Set latestEdition = AIUtil.FindTextBlock("Latest Edition")

Set linuxUnderLatestEdition = AIUtil.FindText("Linux", micWithAnchorAbove, latestEdition)

AIUtil("button", "download", micWithAnchorOnLeft, linuxUnderLatestEdition).Click
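The "closest and most aligned" rule can be sketched in Python as a scoring function. This is a hypothetical model: candidates on the wrong side of the anchor are discarded, and the rest are scored by their distance along the direction plus a weighted misalignment across it. The `Detected` type, the weight of 2.0, and the coordinates are assumptions, not UFT One's actual scoring.

```python
from typing import NamedTuple


class Detected(NamedTuple):
    name: str
    cx: float  # center x
    cy: float  # center y (screen coordinates: y grows downward)


def anchored(candidates, anchor, direction, misalignment_weight=2.0):
    """Pick the candidate closest to, and best aligned with, the anchor.

    `direction` names where the ANCHOR is relative to the object, mirroring
    micWithAnchorOnLeft and the related constants.
    """
    def offsets(c):
        dx, dy = c.cx - anchor.cx, c.cy - anchor.cy
        if direction == "WithAnchorOnLeft":   # object lies right of the anchor
            return dx, abs(dy)
        if direction == "WithAnchorOnRight":  # object lies left of the anchor
            return -dx, abs(dy)
        if direction == "WithAnchorAbove":    # object lies below the anchor
            return dy, abs(dx)
        if direction == "WithAnchorBelow":    # object lies above the anchor
            return -dy, abs(dx)
        raise ValueError(f"unknown direction: {direction}")

    scored = []
    for c in candidates:
        along, across = offsets(c)
        if along > 0:  # keep only candidates on the requested side
            scored.append((along + misalignment_weight * across, c))
    if not scored:
        raise LookupError("no candidate in the requested direction")
    return min(scored, key=lambda pair: pair[0])[1]


linux = Detected("Linux", 100, 200)
downloads = [
    Detected("download-linux", 300, 200),    # same row as the anchor
    Detected("download-windows", 300, 260),  # one row lower
    Detected("download-left", 20, 200),      # left of the anchor
]
print(anchored(downloads, linux, "WithAnchorOnLeft").name)
```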

Note: When you identify an object by its relative location, for example by adding a relation to the object in a recording session or in the AI Object Inspection window, the application size must remain unchanged for the test script to run successfully.

Describe a control using an image

If your application includes a control type that is not supported by AI object identification, you can provide an image of the control that UFT One can use to identify the control. Specify the class name that you want UFT One to use for this control type by registering it as a custom class.

AIUtil.RegisterCustomClass "door", "door.PNG"

AIUtil("door").Click

For more details, see the AIUtil.RegisterCustomClass method in the UFT One Object Model Reference for GUI Testing.

After you register a custom class, you can use it in AIUtil steps as a control type:

AIUtil("<Myclass>")

AIUtil("<Myclass>", micAnyText, micFromTop, 1)

AIUtil("<Myclass>", micAnyText, micWithAnchorOnLeft, AIUtil("Profile"))

You can even use it to describe an anchor object, which is then used to identify other objects in its proximity:

AIUtil("profile", micAnyText, micWithAnchorOnLeft, AIUtil("<Myclass>") )

Note:

- You cannot use a registered class as an anchor to identify another registered class by proximity.

- The image you use to describe the control must match the control exactly.

Verify object identification

To increase the test run success rate, UFT One verifies the object identification before performing the operation. This is useful when objects in the application change location during the run, for example, when they are covered by ads or scrolled to a new location. If UFT One finds that the object moved, it performs the operation at the object's new location.

If verification cannot locate the identified object, UFT One performs the operation at the originally identified location and displays a warning message in the run results report.

By default, object identification verification is enabled for non-mobile contexts and disabled for mobile contexts. You can change the context settings in Tools > Options > GUI Testing > AI Object Detection.

Note: The added verification can slow down the test run.

To enable or disable object identification verification for a specific test run, use the AIRunSettings.VerifyIdentification object. See the AIRunSettings Object in the UFT One Object Model Reference for GUI Testing.
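The verify-then-act flow described above can be modeled in a few lines of Python. This is a hypothetical sketch, not UFT One code: `redetect` stands for searching for the identified object again, `act` stands for the operation (such as a click), and the warning list stands in for the run results report.

```python
from typing import Callable, List, Optional, Tuple

Point = Tuple[int, int]


def act_with_verification(
    original: Point,
    redetect: Callable[[], Optional[Point]],
    act: Callable[[Point], None],
    warnings: List[str],
) -> Point:
    """Re-check the object's location just before acting on it (hypothetical model)."""
    current = redetect()  # look for the same object again
    if current is None:
        # Verification failed: fall back to the originally identified
        # location and record a warning.
        warnings.append(f"Could not verify the object; acting at {original}")
        target = original
    else:
        # The object was found again; use its (possibly new) location.
        target = current
    act(target)
    return target


clicks: List[Point] = []
log: List[str] = []
# The object scrolled from y=20 to y=320 after it was first identified.
act_with_verification((10, 20), lambda: (10, 320), clicks.append, log)
print(clicks, log)
```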

Automatic scrolling

When running a test, if the object is not displayed in the application but the application screen is scrollable, UFT One automatically scrolls further in search of the object. After an object matching the description is identified, no further scrolling is performed. Identical objects displayed in subsequent application pages or screens will not be found.

UFT One scrolls similarly when running a checkpoint that requires that an object not be displayed in the application. The checkpoint passes only if the object is not found even when scrolling to the next pages or screens.

By default, UFT One scrolls down twice. You can customize the direction of the scroll and the maximum number of scrolls to perform, or turn off scrolling if necessary.

- Globally customize the scrolling in Tools > Options > GUI Testing > AI Object Detection, or using a UFT One automation script. See AI Object Detection Pane (Options Dialog Box > GUI Testing Tab).

- Customize the scrolling temporarily within a test run using the AIRunSettings.AutoScroll object. See the AIRunSettings Object in the UFT One Object Model Reference for GUI Testing.
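The automatic scrolling behavior can be summarized as a bounded search loop. The Python sketch below is a hypothetical model: `FakeScrollableApp` is an illustrative stand-in for an application, and the default of two scrolls follows the text above. The search stops at the first match, which is why identical objects on later screens are never reached, and the "not displayed" checkpoint passes only after all allowed scrolls find nothing.

```python
class FakeScrollableApp:
    """Hypothetical stand-in for an application: one list of texts per screen."""

    def __init__(self, screens):
        self.screens = screens
        self.position = 0
        self.scrolls = 0

    def find(self, text):
        # Return (screen index, text) if the text is on the current screen.
        return (self.position, text) if text in self.screens[self.position] else None

    def scroll_down(self):
        self.scrolls += 1
        self.position = min(self.position + 1, len(self.screens) - 1)


def find_with_autoscroll(find, scroll, max_scrolls=2):
    """Look for the object, scrolling up to max_scrolls times."""
    for attempt in range(max_scrolls + 1):
        found = find()
        if found is not None:
            return found  # first match wins; later screens are not searched
        if attempt < max_scrolls:
            scroll()
    return None


def not_displayed_checkpoint(find, scroll, max_scrolls=2):
    """Pass only if the object is not found even after scrolling."""
    return find_with_autoscroll(find, scroll, max_scrolls) is None


app = FakeScrollableApp([["Home"], ["News"], ["Login"]])
print(find_with_autoscroll(lambda: app.find("Login"), app.scroll_down))
print(app.scrolls)
```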

Note: You can also add steps within your test to actively scroll within the application. For details, see AIUtil.Scroll and AIUtil.ScrollOnObject in the UFT One Object Model Reference for GUI Testing.

Update local AI Object Models

UFT One's AI engine uses AI Object Models to facilitate AI-based testing activities. AI Object Models are installed and activated automatically when you install and enable UFT One's AI Object Detection.

New AI Object Models are periodically made available online on the AppDelivery Marketplace. A new model may identify controls more accurately, or even identify additional controls. When a new model is available, you can update the AI Object Model you are using locally without waiting for the next UFT One upgrade.

If necessary, you can revert to the model you used before the update.

Note: If you are using a remote AI Object-Detection service, updating the local AI Object Model does not affect AI object identification.

If a newer model than the one you are using is available, a notification is displayed in the lower right corner of your desktop when you open UFT One. You can update the local AI Object Model from the UFT One user interface or from a command line.

To update the AI Object Model from the UFT One user interface

- Prerequisite: In Tools > Options > GUI Testing > AI Object Detection > Model, configure the Marketplace connection information. See AI Object Detection Pane (Options Dialog Box > GUI Testing Tab).

- Select AI > AI Object Model Update in the toolbar.

  In the AI Object Model Update dialog box that opens, you can see the models you are using and available models you can download. The Active and Latest available models for both web and mobile contexts are labeled as such. The Base model label marks the model version that is provided by default with the UFT One version you are using.

- Select an AI Object Model version and click Download.

  The Status column of the model shows the download progress.

  The download may take some time. You can close the dialog box and continue with your work. When the download is complete, a notification is displayed in the lower right corner of your desktop.

- Click Activate model.

  After the new model is activated, it is used immediately by UFT One's AI engine. The next time you open the AI Object Model Update dialog box, this model is labeled Active.

To update the AI Object Model from a command-line interface

To update the active AI Object Model from a command line, run the ModelInstallApp.exe command. This option is designed for users whose UFT One machines have no network connection: download the model on one computer, and then use it to update all of your UFT One machines.

Preparation

- Download the ModelInstallApp.zip file from Marketplace and extract its content.

- In Marketplace, locate the model you want to activate. Download the model and configuration zip files to a local folder.

Syntax

Run the following command in a command-line window:

ModelInstallApp.exe --model <Model zip file> --config <config zip file>
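When you update several offline machines, you might wrap the command in a small script and run it on each one. The Python sketch below is hypothetical: the helper names, the default executable path, and the zip file names are placeholders, and only the `--model` and `--config` options come from the syntax above.

```python
import subprocess
from pathlib import Path


def model_install_command(model_zip, config_zip, exe="ModelInstallApp.exe"):
    """Build the ModelInstallApp.exe argument list from the documented syntax."""
    return [str(exe), "--model", str(Path(model_zip)), "--config", str(Path(config_zip))]


def install_model(model_zip, config_zip, exe="ModelInstallApp.exe"):
    # Run the installer and raise CalledProcessError if it reports failure.
    subprocess.run(model_install_command(model_zip, config_zip, exe), check=True)


# Placeholder file names; use the zip files you downloaded from Marketplace.
print(model_install_command("model.zip", "config.zip"))
```

Passing the arguments as a list avoids shell quoting problems when the folder containing the zip files has spaces in its name.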

See also:
